Virtualization, Cloud, Infrastructure and all that stuff in-between

My ramblings on the stuff that holds it all together

8-Node ESXi Cluster Running 60 Virtual Machines – All Running from a Single £500 Physical Server

I am currently presenting a follow-up to my previous vTARDIS session for the London VMware Users Group, where I demonstrated a 2-node ESX cluster on cheap PC-grade hardware (HP ML115 G5).

The goal of this build is to create a system you can use for VCP and VCDX-type study without spending thousands on normal production-grade hardware (see the slides at the end of this page for more on why this is useful). TechHead and I have a series of joint postings in the pipeline about how to configure the environment and the best hardware to use.

As a bit of a tangent, I have been seeing how complex an environment I can get out of a single server (which I have dubbed v.T.A.R.D.I.S: Nano Edition) using virtualized ESXi hosts. The goals were:

- Distributed vSwitch and/or Cisco Nexus 1000V

- Cluster with HA/DRS enabled

- Large number of virtual machines

- Single cheap server solution

- No external networking hardware (all traffic stays on internal vSwitches/dvSwitches)

The main stumbling block I ran into with the previous build was the performance of the SATA hard disks I was using. SCSI was out of my budget, and SATA soon gets bogged down with concurrent requests, which makes it slow, so I started to investigate solid-state storage (previous posts here).

By keeping the virtual machine configurations light and using thin provisioning, I hoped to squeeze a lot of virtual machines onto a single disk. Previous findings seem to show that cheaper consumer-grade SSDs can support a massive number of IOPS compared to SATA (Eric Sloof has a similar post on this here).

So, I voted with my credit card and purchased one of these from Amazon. It wasn’t “cheap” at c.£200, but it will let me scale my environment bigger than I could previously manage, which means less power, cost, CO2 and all the other usual arguments you use to convince yourself that a gadget is REQUIRED.

So the configuration I ended up with is as follows:

| Component | Approximate cost |
| --- | --- |
| 1 x HP ML115 G5, 8GB RAM, 144GB SATA HDD | c.£300 (see here), but with more RAM |
| 1 x 128GB Kingston 2.5” SSDNow V-Series SSD | c.£205 |

I installed ESX 4 Update 1 (classic) on the physical hardware, then installed 8 x ESXi 4 Update 1 instances as virtual machines inside that ESX installation.

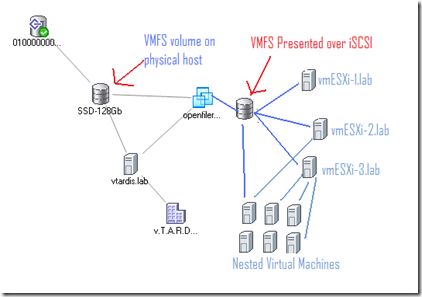

This diagram shows the physical server’s network configuration

In order for the virtualized ESXi instances to talk to each other, you need to update the security setting on the physical host’s vSwitch only, as shown below:

This diagram shows the virtual network configuration within each virtualized ESXi VM, with the vSwitch and dvSwitch configs side by side.

I then built a Windows Server 2008 R2 virtual machine running vCenter 4 Update 1 and added all the hosts to it to manage.

I clustered all the virtual ESXi instances into a single DRS/HA cluster, turning off admission control, as we will be heavily oversubscribing the resources of the cluster and this is just a lab/PoC setup.

Cluster Summary – 8 x virtualized ESXi instances. Note the heavy RAM oversubscription: this server only has 8GB of physical RAM, but the cluster thinks it has nearly 64GB.
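As a rough worked example, the oversubscription in the cluster summary is just configured memory divided by physical memory. Here is a minimal Python sketch, assuming the approximate figures above (just under 64GB presented to the cluster, 8GB installed). `oversubscription_ratio` is a hypothetical helper, not part of any VMware tool:

```python
def oversubscription_ratio(configured_gb: float, physical_gb: float) -> float:
    """Return how many GB of configured memory each physical GB is backing."""
    if physical_gb <= 0:
        raise ValueError("physical_gb must be positive")
    return configured_gb / physical_gb


# Approximate figures from the cluster summary: ~64GB presented, 8GB installed.
ratio = oversubscription_ratio(64, 8)
print(f"{ratio:.1f}:1")  # 8.0:1
```

Each physical GB is nominally backing about 8GB that the cluster believes it has. That only holds up because the nested hosts and VMs touch a small fraction of what they are allocated.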

I then built an OpenFiler virtual machine and hooked it up to the internal vSwitch so that the virtualized ESXi VMs can access it via iSCSI. It has a virtual disk on the SSD and presents a 30GB VMFS volume over iSCSI to the virtual cluster nodes. All the iSCSI traffic is essentially in-memory, as there is no physical networking for it to traverse.

Each virtualized ESXi node then runs a number of nested virtual machines (VMs running inside VMs).

To get nested virtual machines to work, you need to enable this setting on each virtualized ESXi host (the nested VMs themselves don’t need any special configuration).

Once this was done and all my ESXi nodes were running and had settled down, I used a script to build out a whole bunch of nested virtual machines to run on my 8-node cluster. The VMs aren’t anything special: each has 512MB allocated to it and won’t actually boot past the BIOS, because my goal here is just to simulate a large number of virtual machines and their configuration within vCenter rather than run an actual workload. Remember, this is a single-server configuration and you can’t override the laws of physics; there is only really 8GB of RAM and 4 CPU cores available.
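The original build script isn't shown, so the following only sketches its planning side, in Python rather than whatever the real script used. It generates 60 identical 512MB VM specs and spreads them round-robin across the 8 virtualized hosts. The names `nested-vm-NN`, `vesxi-N` and `plan_nested_vms` are hypothetical. In the real cluster, DRS can rebalance the VMs afterwards.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class NestedVmSpec:
    name: str       # hypothetical naming scheme
    host: str       # virtualized ESXi host the VM is placed on
    memory_mb: int  # configured memory, not necessarily consumed


def plan_nested_vms(count: int, hosts: list, memory_mb: int = 512) -> list:
    """Create `count` identical VM specs spread round-robin across `hosts`."""
    if not hosts:
        raise ValueError("need at least one host")
    return [
        NestedVmSpec(f"nested-vm-{i + 1:02d}", hosts[i % len(hosts)], memory_mb)
        for i in range(count)
    ]


hosts = [f"vesxi-{n}" for n in range(1, 9)]  # 8 virtualized ESXi hosts
specs = plan_nested_vms(60, hosts)

total_mb = sum(spec.memory_mb for spec in specs)
per_host = Counter(spec.host for spec in specs)
print(total_mb)                   # 30720
print(sorted(per_host.values()))  # [7, 7, 7, 7, 8, 8, 8, 8]
```

The total makes the “laws of physics” point concrete. The guests alone are configured with 30,720MB, before counting the nested hosts themselves, against 8GB of physical RAM.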

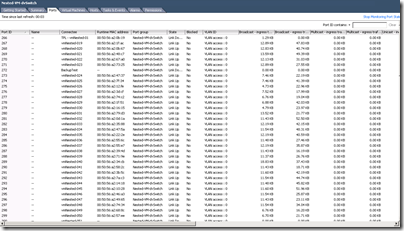

Each of the virtual machines was connected to a dvSwitch for VM traffic – which you can see here in action (the dvUplink is actually a virtual NIC on the ESXi host).

I power up the virtual machines in batches of 10 to avoid swamping the host, and the SSD holds up very well against the I/O.
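Powering on in fixed-size batches is easy to script. This minimal Python sketch covers only the batching logic. It assumes a hypothetical `power_on` callback that wraps whatever management interface you use, and an arbitrary 60-second pause between batches. Neither comes from the original setup.

```python
import time
from typing import Callable, Iterable, Iterator, List, TypeVar

T = TypeVar("T")


def batched(items: Iterable[T], size: int) -> Iterator[List[T]]:
    """Yield successive lists of at most `size` items."""
    if size < 1:
        raise ValueError("size must be >= 1")
    batch: List[T] = []
    for item in items:
        batch.append(item)
        if len(batch) == size:
            yield batch
            batch = []
    if batch:
        yield batch


def power_on_in_batches(
    vm_names: Iterable[str],
    power_on: Callable[[str], None],
    batch_size: int = 10,
    pause_s: float = 60.0,
    sleep: Callable[[float], None] = time.sleep,
) -> int:
    """Power on VMs one batch at a time, pausing between batches.

    Returns the number of batches started.
    """
    batches = list(batched(vm_names, batch_size))
    for index, batch in enumerate(batches):
        for name in batch:
            power_on(name)  # hypothetical callback
        if index < len(batches) - 1:
            sleep(pause_s)  # let storage I/O settle before the next batch
    return len(batches)


started: List[str] = []
count = power_on_in_batches(
    [f"nested-vm-{i:02d}" for i in range(1, 61)],
    power_on=started.append,
    pause_s=0,
)
print(count, len(started))  # 6 60
```

The `sleep` parameter is injectable so the pacing can be tested without waiting. The pause is skipped after the final batch.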

With all 60 of the nested VMs and the virtualized ESXi instances loaded, these are the load stats:

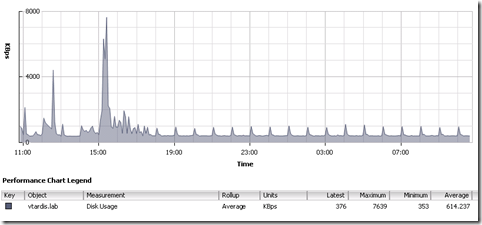

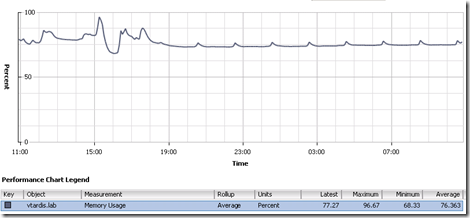

I left it to idle overnight, and these are the performance charts for the physical host; the big spike at 15:00 was the script deploying the 60 virtual machines.

Disk Latency

Physical memory consumption – still a way to go to reach 8GB. Who says oversubscription has no use? 🙂

So, in conclusion, this shows that you can host a large number of virtual machines for a lab setup. It obviously isn’t of much use in a production environment, because as soon as those 60 VMs actually start doing something they will consume real memory and CPU, and you will run out of raw resources.

The key to making this usable is the solid-state disk. In my previous experiments I found SATA disks just got swamped under load, which caused things like access to the VMFS to fail (see this post for more details).

Whilst not a production solution, this sort of setup is ideal for VCP/VCDX study, as it allows you to play with all the enterprise-level features like dvSwitch and DRS/HA that need more than just a couple of hosts and VMs to really understand how they work. For example, you can power off one of the virtual ESXi nodes to simulate a host failure and invoke the HA response; similarly, you can disconnect the virtual NIC from an ESXi VM to simulate the host isolation response.

Whilst this post has focused on non-production/lab scenarios, the setup could also be used to test VMware patch releases for production services if you are short on hardware, and you can quite happily run Update Manager in it.

If you run this lab at home, it’s also very power-efficient and quiet. There are no external cables or switches other than a crossover cable to a laptop to run the VI Client and administer it, so you could comfortably have it in your house without it bothering anyone, and with an SSD there is no hard disk noise under load either 🙂

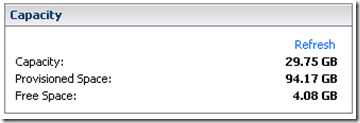

Thin provisioning also makes good use of an SSD in this situation, as this screenshot from a 30GB virtual VMFS volume shows.

The only thing you won’t be able to play around with seriously in this environment is the new VMware FT feature. It is possible to enable it using the information in this post and learn how to enable/disable it, but it won’t remain stable: the secondary VM will lose sync with the primary after a while, as FT doesn’t seem to work very well in a nested VM. If you need to use FT, for now you’ll need at least 2 physical FT-capable servers (as shown in the original vTARDIS demo).

If you are wondering how noisy it is at power-up/down, TechHead has this video on YouTube showing the scary-sounding start-up noise and how quiet it gets once the fan control kicks in.

Having completed my VCP3 and VCP4, I’m on the path to my VCDX. Next up is the Enterprise exam, so this lab is going to be key to my study when the vSphere exams are released.

Hey there,

You mention you use the SSD, but I’m curious to know if you’ve had failures with it? There’s a bit of huff and puff about how many writes and rewrites etc. before an SSD should die, so I’m guessing yours would have a lot. How long do you expect it to live?

I did have one SSD die – it was a Transcend one, but I think that was just bad luck rather than overuse, as it was only lightly used in a laptop at the time. The biggest problem for me was that the failure was subtle: no clicking or whirring like you get with a traditional spinning disk.

My second SSD, the 128GB Kingston one, has been running fine for nearly a year now without issue.

Hi,

I have a question: when you are using virtual ESXi hosts, how does vMotion work? I was told guest VMs have to use 32-bit operating systems; is that correct?

I have tried to vMotion a 64-bit OS, but I keep getting incompatible CPU errors relating to long mode.

I can’t seem to find a way to enable VT mode on the virtual ESXi hosts… I’m using ESXi 4.1.

Any help would be appreciated.

Thanks

Thanks for your comment, and sorry for the delay in replying.

vMotion works fine in my environments between guest VMs running on nested ESXi hypervisors; just make sure you have the storage and vMotion networks mapped correctly (see my posts for some screenshots).

Guest VMs can only be 32-bit at the moment, yes, that is correct.

The virtual ESXi hosts can’t see the VT/AMD-V functionality, so they won’t be able to run a 64-bit nested VM.
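From inside a Linux guest you can see which of these CPU features the hypervisor exposes. On x86 Linux, Intel VT-x appears as the `vmx` flag and AMD-V as `svm` in `/proc/cpuinfo`. 64-bit support (the “long mode” mentioned in the error above) appears as `lm`. Below is a minimal Python sketch that parses that text. The sample string is invented for illustration, and this is a diagnostic aid rather than anything VMware provides.

```python
def cpu_flags(cpuinfo_text: str) -> set:
    """Collect the feature flags from /proc/cpuinfo-style text (x86 Linux)."""
    flags = set()
    for line in cpuinfo_text.splitlines():
        key, sep, value = line.partition(":")
        if sep and key.strip() == "flags":
            flags.update(value.split())
    return flags


def virtualization_report(flags: set) -> dict:
    """Report the flags relevant to 64-bit and hardware-assisted virtualization."""
    return {
        "vt-x (vmx)": "vmx" in flags,
        "amd-v (svm)": "svm" in flags,
        "64-bit long mode (lm)": "lm" in flags,
    }


# Made-up sample; on a real Linux VM use open("/proc/cpuinfo").read() instead.
sample = "processor\t: 0\nflags\t\t: fpu vme de pse tsc msr pae lm sse2\n"
print(virtualization_report(cpu_flags(sample)))
# {'vt-x (vmx)': False, 'amd-v (svm)': False, '64-bit long mode (lm)': True}
```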

Thank you for the clarification.

On a separate note, what would you recommend for an iSCSI storage device?

I was thinking of getting a QNAP NAS which supports iSCSI, but then I found a dual-core AMD PC.

I was thinking of adding 2 NICs to the PC and then installing StarWind on its OS.

I think this would be a cheaper and reliable option.

The PC has 2 x 1TB Seagate disks, so disk space is not an issue.

Do you think this is a good idea?

A PC-based approach is cheaper if you already have the hardware, and there are plenty of open-source/free NAS downloads; I’ve done the same in the past with OpenFiler.

You can use StarWind, but you’ll also need Windows on the box. I found performance was generally OK when I did that, but it was a pain whenever I needed to patch/reboot the Windows host.

Maybe check out this post, https://vinf.net/2011/01/11/building-a-fast-and-cheap-nas-for-your-vsphere-home-lab-with-nexentastor/, which has plenty of bells and whistles to play about with.

HTH

I was all excited until I realised that none of the nested VMs are really doing anything. 😀

I did the same thing a while back on my notebook, with no SSD, just the built-in 2.5-inch SATA disk. (I wish the price of SSDs would just drop…)

Win7 x64 with VMware Workstation 7.1, i7 with 8GB RAM:

- 2x virtual ESXi
- 2x virtual ESX
- 1x W2008 x64 with vCenter
- 1x virtual SAN (iSCSI) (demo license)
- 1x nested W2008 x32
- 1x nested W2003 x32

Even managed to vMotion and Storage vMotion across the virtual ESXi hosts.

Haha, yeah, they’re not doing a lot, but enough to achieve the goal, and you cannae defy the laws of physics /scotty.

I have had some success at a smaller scale booting Damn Small Linux in the nested ESXi hosts; it’s only 50MB and can boot from an .iso file.

And now the stupid newbie question for 2011!

What are the limits if I use ESXi instead of ESX classic for a vTARDIS-like lab at home?

You can use ESXi (ESX without the service console).

If you mean the free version of ESXi (rather than a trial or full license), http://blogs.vmware.com/vsphere/2010/07/introducing-vmware-sphere-hypervisor-41-the-free-edition-of-vmware-vsphere-41.html, you won’t be able to use vCenter, clustering, HA etc. (so pretty much all the useful things you want for learning).

You can still run VMs and nested VMs with the free version, but the higher-level functionality is missing.

HTH

Hi,

Firstly, thank you for all your great advice.

I have hit a stumbling block, probably due to my networking skills being really crap lol.

I’ve got virtual ESXi set up with nested VMs, but I’m having trouble with the network setup.

I would like to separate the VM, iSCSI and vMotion traffic, but I’m not sure how to do this.

I tried a few things, but nothing worked.

Are there any sites which have a step-by-step guide to setting up vSphere networking and separating network traffic?

My physical host has 4 NICs in it, and I’m not sure how I should set things up.

Any help, anyone, pleaseeeee lol

Just purchased an HP ML350 G6 (dual-core 5640, 8GB RAM) as my primary ESXi server. What would you suggest as a minimum HP server for the second one?

The main thing to look for is another box that has a very similar CPU, so that you can vMotion between the two, and that will run ESX.
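Here is a very simplified way to picture the “similar CPU” requirement. A running VM may be using any CPU feature the source host exposes, so the destination needs all of them. The Python sketch below compares two made-up feature-flag sets. The real checks in vCenter are more involved. VMware's Enhanced vMotion Compatibility (EVC) exists to mask the hosts in a cluster down to a common CPU baseline.

```python
def missing_features(source_flags: set, dest_flags: set) -> set:
    """Features the source CPU exposes that the destination CPU lacks."""
    return source_flags - dest_flags


def looks_vmotion_compatible(source_flags: set, dest_flags: set) -> bool:
    # Simplified: a running VM may rely on any feature the source exposes,
    # so every one of them must also exist on the destination.
    return not missing_features(source_flags, dest_flags)


# Hypothetical flag sets for two hosts (illustrative only).
host_a = {"sse2", "sse3", "ssse3", "sse4_1", "sse4_2", "lm", "vmx"}
host_b = {"sse2", "sse3", "ssse3", "lm", "vmx"}

print(looks_vmotion_compatible(host_a, host_b))  # False
print(sorted(missing_features(host_a, host_b)))  # ['sse4_1', 'sse4_2']
print(looks_vmotion_compatible(host_b, host_a))  # True
```

The check is directional. In this example, a VM started on the older host could move to the newer one, but not the other way round.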

Great article… one question though. How can you run 8 ESXi hosts with 2GB of memory each on a host with only 8GB? I’m slightly confused! I have an HP ML110 G6 with 9GB, on which I’m planning to run 8 ESXi 5 hosts (2GB each) and 1 vCentre WIN2K8 R2 VM (2GB). This totals 18GB, so how is this possible? Cheers!

Memory oversubscription, which is a built-in ESX feature: http://blogs.vmware.com/virtualreality/2008/10/memory-overcomm.html. Combine that with memory compression (v4.1+ only) and it’s great.
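Here are the numbers from the question above worked through. The plan configures twice the physical RAM. ESX only has to reclaim the difference if the guests actually touch all of their memory, using transparent page sharing, ballooning, memory compression on 4.1+ and, as a last resort, swapping. Below is a minimal Python sketch of the arithmetic. `plan_memory` is a hypothetical helper, and it ignores per-VM virtualization overhead.

```python
def plan_memory(vm_sizes_gb: list, physical_gb: float) -> tuple:
    """Return (configured GB, overcommit ratio, GB beyond physical RAM).

    Ignores per-VM virtualization overhead, so real headroom is a bit lower.
    """
    configured = sum(vm_sizes_gb)
    ratio = configured / physical_gb
    beyond_physical = max(0, configured - physical_gb)
    return configured, ratio, beyond_physical


# The commenter's plan: 8 nested ESXi 5 hosts at 2GB each plus a 2GB vCentre VM,
# on an HP ML110 G6 with 9GB of physical RAM.
plan = [2] * 8 + [2]
configured, ratio, beyond = plan_memory(plan, 9)
print(configured, f"{ratio:.1f}:1", beyond)  # 18 2.0:1 9
```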

Thanks for that! 🙂 I notice that I don’t have to do anything to enable memory overcommit?

I’m very interested in doing a vTARDIS on a laptop, as alluded to in The Register article below. I would like to use Windows Server 2012 as the host, though (free license through DreamSpark). What is special about Windows 8 that enables this, and is the same feature present in Windows Server 2012?

http://www.theregister.co.uk/2014/02/12/home_lab_operators_ditch_your_servers_now/

Nothing special about Windows 8, other than the laptop shipped with the license 🙂 Windows Server 2012 should be able to function the same. As long as you can get the wireless network and power management support working, you should also be able to take advantage of the NFS server in Windows Server.

Happy building.